Real-Time Pricing Intelligence with AI Agents

Move from static pricing to real-time, AI agent-driven optimization. Learn architecture, data, methods, and governance to lift revenue and margin in 2026.

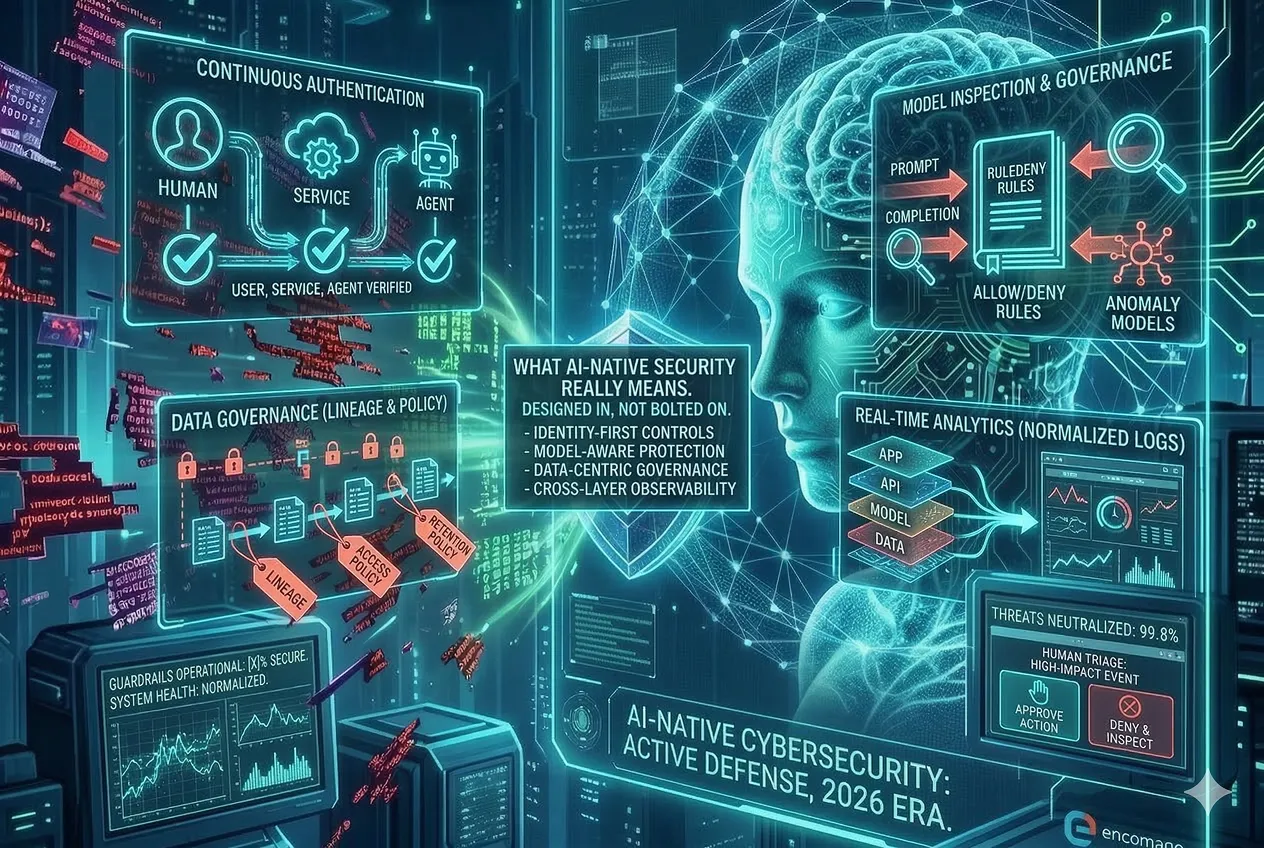

Automated attackers are no longer theory. In 2026, low-cost AI agents probe APIs, write convincing phishing at scale, and adapt faster than human teams can react. To stay resilient, B2B organizations need AI-native cybersecurity: defenses designed for autonomy, speed, and scale. This post breaks down the threat model, the architecture that holds up under pressure, and a pragmatic roadmap you can start today.

Offense has gone autonomous. Attackers use large language models (LLMs) and scripted agents to chain reconnaissance, exploit testing, and data exfiltration without pause. Social engineering is supercharged by voice and video synthesis. API and identity abuse dominate entry points. Your defenses must assume scale, persistence, and rapid iteration.

The implication is simple: controls built for occasional, manual attacks will buckle under automated pressure. You need defenses that adapt as fast as attacks evolve.

AI-native security is not bolting a scanner onto an AI app. It means designing your product, data, and operations so detection, response, and guardrails are first-class. It blends deterministic rules (allow and deny) with probabilistic detection (anomaly models) and keeps a human in the loop for high-impact decisions.

Done well, AI augments your analysts, triage, and incident response. It shortens the gap between signal and action.

Start with Zero Trust (never trust, always verify) and least privilege. Treat identity as the control plane, not the network. Then layer controls that contain blast radius when—not if—automation slips past a boundary.

Architecture is only half the story. Make it operable: version policies, simulate changes, and recover quickly. Resilience is the product of design and disciplined runbooks.

Automated adversaries exploit scale and speed. Counter with friction where it counts and signals that help you pick out bots from humans.

These controls buy you time and telemetry. Both matter when attacks iterate thousands of times per hour.

AI systems widen the attack surface. Treat model inputs, retrieval layers, and training data as high-value assets.

Model safety is a lifecycle, not a launch task. Bake these controls into CI/CD and retraining pipelines.

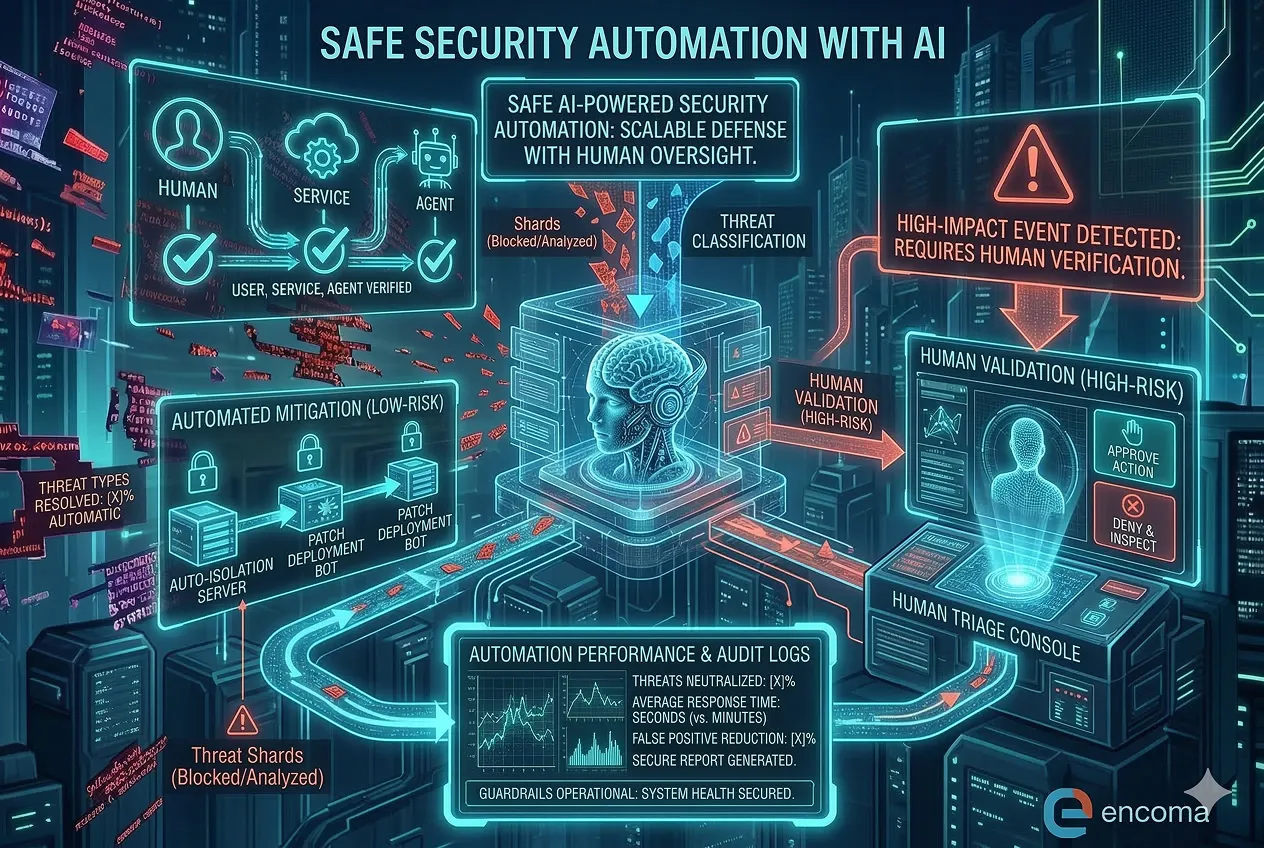

Automation cuts response times, but safety comes first. Use AI as a tier-1 analyst and a co-pilot for remediation, not an unsupervised operator.

When automation is predictable and observable, you can scale it without fear—and reclaim analyst time for higher-value work.

Security leaders win support when they show business impact. Track metrics that map to risk reduction and operating efficiency.

Instrument from baseline to production. Make improvements visible to your board and customers.

You do not need a big-bang overhaul. Sequence wins so value shows up early while the platform matures.

Governance glues it together: cross-functional ownership across security, data, and operations; clear SLAs; and regular post-incident learning.

Let’s build something powerful together - with AI and strategy.

.avif)